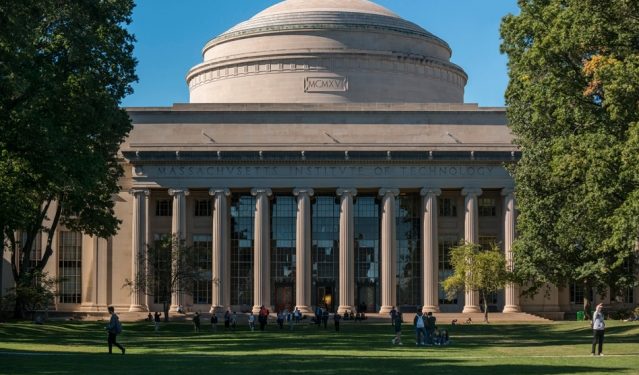

Boston: Researchers at MIT on Monday unveiled an artificial intelligence (AI) system that can visualise objects through touch and sense how they feel through sight, paving the way for robots that can more easily grasp and recognise objects.

While our sense of touch gives us a channel to feel the physical world, our eyes help us immediately understand the full picture of these tactile signals.

Robots that have been programmed to see or feel can’t use these signals quite as interchangeably.

To better bridge this sensory gap, researchers from the Massachusetts Institute of Technology (MIT) in the US have come up with a predictive AI that can learn to see by touching, and learn to feel by seeing.

The system can create realistic tactile signals from visual inputs, and predict which object is being touched, and which part of it, directly from those tactile inputs.

The team used a robot arm fitted with a special tactile sensor called GelSight.

Using a simple web camera, the team recorded nearly 200 objects, such as tools, household products, fabrics, and more, being touched over 12,000 times.

Breaking those 12,000 video clips down into static frames, the team compiled ‘VisGel’, a dataset of over three million visual/tactile-paired images.
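To make the "clips to frames" step concrete, here is a minimal sketch assuming each recorded touch is stored as two equal-length frame sequences, one from the web camera and one from GelSight. The function name `clips_to_pairs` and this data layout are hypothetical; the article does not describe VisGel's actual format. As a rough check on the reported numbers, three million pairs from 12,000 clips works out to about 250 frames per clip on average (3,000,000 / 12,000 = 250).

```python
from typing import List, Sequence, Tuple, TypeVar

V = TypeVar("V")  # type of a visual (camera) frame
T = TypeVar("T")  # type of a tactile (GelSight) frame


def clips_to_pairs(
    clips: Sequence[Tuple[Sequence[V], Sequence[T]]]
) -> List[Tuple[V, T]]:
    """Flatten touch clips into (visual, tactile) frame pairs.

    Hypothetical layout: each clip is (visual_frames, tactile_frames),
    recorded in sync so that frame i of one matches frame i of the other.
    """
    pairs: List[Tuple[V, T]] = []
    for visual, tactile in clips:
        if len(visual) != len(tactile):
            raise ValueError("each clip needs as many visual as tactile frames")
        # Pair frames by their shared time index.
        pairs.extend(zip(visual, tactile))
    return pairs
```

For example, two clips with three and two synchronised frames yield five training pairs.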

“By looking at the scene, our model can imagine the feeling of touching a flat surface or a sharp edge,” said Yunzhu Li, a PhD student and lead author on a new paper about the system.

“By blindly touching around, our model can predict the interaction with the environment purely from tactile feelings,” Li said.

“Bringing these two senses together could empower the robot and reduce the data we might need for tasks involving manipulating and grasping objects,” he said.

Recent work to equip robots with more human-like physical senses has relied on large datasets, but comparable datasets have not been available for understanding interactions between vision and touch.

The technique gets around this by using the VisGel dataset and something called generative adversarial networks (GANs).

Here, the GANs take visual or tactile images and generate images in the other modality.

They work by using a ‘generator’ and a ‘discriminator’ that compete with each other, where the generator aims to create real-looking images to fool the discriminator.

Every time the discriminator ‘catches’ the generator, it has to expose the internal reasoning for the decision, which allows the generator to repeatedly improve itself, researchers said.
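In a standard GAN, the "internal reasoning" the discriminator exposes is its gradient: the generator is updated by differentiating the discriminator's verdict with respect to the generator's own parameters. The sketch below is a deliberately tiny one-dimensional toy, not the MIT model. Its assumptions: "real" data are numbers drawn from a standard normal distribution, the generator G(z) = z + b only learns a shift b (starting at 3), the discriminator is logistic regression D(x) = sigmoid(w·x + c), and the generator uses the common non-saturating loss -log D(G(z)). All names and settings are illustrative.

```python
import math
import random


def sigmoid(s: float) -> float:
    """Numerically stable logistic function."""
    if s >= 0:
        return 1.0 / (1.0 + math.exp(-s))
    e = math.exp(s)
    return e / (1.0 + e)


def train_toy_gan(steps: int = 1500, n: int = 256, seed: int = 0,
                  start: float = 3.0, lr: float = 0.1) -> float:
    """Train a 1-D toy GAN (illustrative only); return the generator's shift b."""
    rng = random.Random(seed)
    real = [rng.gauss(0.0, 1.0) for _ in range(n)]   # "real" data ~ N(0, 1)
    noise = [rng.gauss(0.0, 1.0) for _ in range(n)]  # generator input z ~ N(0, 1)

    b = start        # generator G(z) = z + b; starts away from the real data
    w, c = 0.0, 0.0  # discriminator D(x) = sigmoid(w * x + c)

    for _ in range(steps):
        fake = [z + b for z in noise]

        # Discriminator step: gradient ascent on
        # mean(log D(real)) + mean(log(1 - D(fake))).
        gw = gc = 0.0
        for x in real:
            d = sigmoid(w * x + c)
            gw += (1.0 - d) * x
            gc += 1.0 - d
        for x in fake:
            d = sigmoid(w * x + c)
            gw -= d * x
            gc -= d
        w += lr * gw / n
        c += lr * gc / n

        # Generator step: gradient descent on mean(-log D(G(z))).
        # The gradient is computed through the discriminator's parameters (w, c):
        # this is the feedback the generator gets each time it is "caught".
        gb = 0.0
        for z in noise:
            d = sigmoid(w * (z + b) + c)
            gb -= (1.0 - d) * w  # derivative of -log D(z + b) with respect to b
        b -= lr * gb / n
    return b
```

Calling `train_toy_gan()` should return a value of b near 0, meaning the generator's samples have drifted onto the real data because the discriminator could no longer tell them apart. Image GANs such as the one described here replace these three scalars with deep networks, but the alternating update is the same idea.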

Humans can infer how an object feels just by seeing it.

To give machines this ability, the system first had to locate the position of the touch, and then deduce information about the shape and feel of that region.

The reference images, which show the scene without any robot-object interaction, helped the system encode details about the objects and the environment.

When the robot arm was operating, the model could simply compare the current frame with its reference image, and easily identify the location and scale of the touch.
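The MIT model learns this comparison inside a neural network. A much simpler hand-written stand-in, shown only to illustrate the idea, is pixel-wise frame differencing: pixels that changed by more than a threshold relative to the reference image mark where contact is happening, and the bounding box of those pixels gives a location and a scale. The function `locate_touch`, the grayscale list-of-lists image format and the 0.1 threshold are all assumptions, not details from the paper.

```python
from typing import List, Optional, Tuple

Image = List[List[float]]  # hypothetical: grayscale frame, values in [0, 1]


def locate_touch(reference: Image, current: Image,
                 threshold: float = 0.1) -> Optional[Tuple[int, int, int, int]]:
    """Return (top, left, bottom, right) of the changed region, or None."""
    if len(reference) != len(current) or any(
            len(r) != len(c) for r, c in zip(reference, current)):
        raise ValueError("reference and current frames must have the same shape")
    # Collect every pixel that differs noticeably from the reference frame.
    changed = [(i, j)
               for i, (ref_row, cur_row) in enumerate(zip(reference, current))
               for j, (r, c) in enumerate(zip(ref_row, cur_row))
               if abs(c - r) > threshold]
    if not changed:
        return None  # frame matches the reference: no visible contact
    rows = [i for i, _ in changed]
    cols = [j for _, j in changed]
    # The box position is the touch location; its size is the touch scale.
    return min(rows), min(cols), max(rows), max(cols)
```

For a blank 5×5 reference and a current frame where pixels (1, 2), (2, 2) and (2, 3) have brightened, the function returns the box (1, 2, 2, 3). A fixed threshold is brittle under lighting changes, which is one reason a learned comparison is preferable in practice.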

For touch to vision, the aim was for the model to produce a visual image based on tactile data, researchers said.

The model analysed a tactile image, and then figured out the shape and material of the contact position, they said.

It then looked back to the reference image to ‘hallucinate’ the interaction.

For example, if during testing the model was fed tactile data on a shoe, it could produce an image of where that shoe was most likely to be touched.

This type of ability could be helpful for accomplishing tasks in cases where there is no visual data, like when a light is off, or if a person is blindly reaching into a box or unknown area, researchers said.

PTI